RLM: How to Process 10x More Context Without Losing Quality

Imagine you have a lengthy legal document, or a massive codebase, and for some reason you need the LLM to fully understand its contents. If you use ChatGPT, you already know what happens when you try to dump too much information into the chat at once: it simply won’t allow it. Even if you split the document into smaller parts, the LLM may still struggle to connect the dots between those parts due to context limitations.

Let’s say you managed to paste everything you wanted into ChatGPT and asked a question about something near the beginning of the text. The LLM probably won’t answer correctly because that initial section has already fallen out of context — a phenomenon known as context rot.

There are some techniques to work around these limitations, like RAG (Retrieval-Augmented Generation) and context compaction. But recently, the paper “Recursive Language Models” introduced an interesting and straightforward approach to implement, with no performance degradation even with 10M+ tokens in context.

What is Context Rot?

Before diving into the solution, it’s worth understanding the problem. Context rot is the phenomenon where an LLM’s response quality degrades as the context gets longer, even when it technically still fits within the model’s context window.

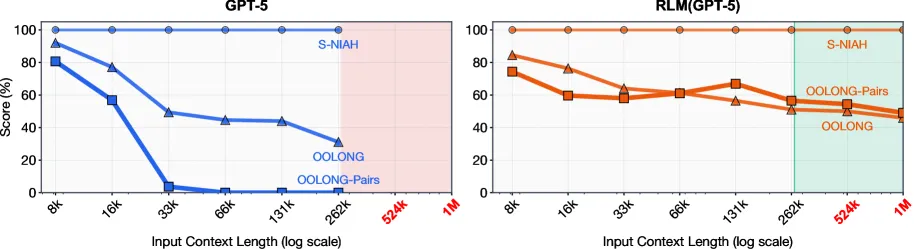

The paper demonstrates this clearly in Figure 1:

GPT-5, even with a 272K token context window, shows drastically reduced performance on tasks requiring dense information processing. And the more complex the task, the faster this degradation occurs.

GPT-5, even with a 272K token context window, shows drastically reduced performance on tasks requiring dense information processing. And the more complex the task, the faster this degradation occurs.

Recursion in LLMs

The idea of recursion in LLMs has been explored for some time, particularly in agents that use LLMs for decision-making and solving complex problems. When we use ChatGPT and see it “thinking” (Chain-of-Thought) or asking itself questions to arrive at an answer (Self-Reflection / Self-Critique), we’re witnessing recursion in action. The LLM is essentially self-consulting to refine its response.

All of this is done to produce better answers through an iterative process. But what this paper proposes is different: using recursion to expand the LLM’s effective context, allowing it to “remember” more information than the token limit would normally permit.

The Core Idea Behind RLM

The insight behind RLM is simple but powerful: the context should not be fed directly into the neural network, but instead treated as part of an external environment that the LLM can interact with programmatically.

In practice, the implementation works like this:

- The context becomes a variable in a REPL environment (Python)

- The LLM writes code to examine and decompose that context

- The LLM calls itself recursively to process smaller pieces

- Results are aggregated to form the final answer

The main LLM acts as a coordinator that has access to the complete context (as a variable) but never loads it entirely into its context window. It decides how to split, what to examine, and delegates sub-tasks to other LLM calls.

Practical Example: Finding Anomalies in Shakespeare

Shakespeare’s complete works are vast and complex, with many characters, plots, and interconnected themes. I chose this as a test case because:

- It’s in the public domain (available here)

- It’s large enough to exceed context limits

- Asking about Shakespeare could rely on “memorized” knowledge from the model — inserting an anomaly forces the model to actually read the context

Using wc on Linux, we can see that the pg100.txt file (containing all of Shakespeare’s works) has nearly 1 million words:

wc -w pg100.txt966503 pg100.txtIf we assume an average of 1.33 tokens per word (this varies by language and text, but it’s a reasonable estimate), we have approximately 1.28 million tokens in total.

- GPT-4 with 128K token context: can “see” about 10% of the text

- GPT-5 with 400K token context: still limited to about 31% of the text

The Test

I modified the file and inserted a passage from Romeo and Juliet that Shakespeare definitely did not write:

JULIET.O Romeo, Romeo, wherefore art thou Romeo?Deny thy father and refuse thy name.Or if thou wilt not, be but sworn my love,And I’ll no longer be a Capulet.

ROMEO.[_Aside._] Shall I hear more, or shall I speak at this?Nay, I must speak.Good Juliet, soft! I’ve traded swords for code,My nights for GPUs that hum till dawn.I chase no feuds, no houses, red nor blue—I chase the loss that finally converges.

JULIET.What say’st thou, Romeo? What strange tongue is this?

ROMEO.Fair love, I now would learn the art of LLMs,And — pray thee, judge me not — I have a love.She answers swift, in tokens sweet and kind,Remembers context better than my friends,And never says, “We need to talk” at night.

JULIET.An AI girlfriend? O treachery most strange!

ROMEO.Fear not—she’s open-source, transparent, true.No secrets hid, no silent gradients.Yet if thou still would’st have my mortal heart,Help me debug—then thou shalt be my prompt.The prompt was simple: I asked the LLM to identify if there were any anomalies in the text, without giving hints about characters or location.

Result

╭─ ★ Final Answer ──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮│ ││ FOUND DIALOGUE: ││ ROMEO. ││ Good Juliet, soft! I’ve traded swords for code, ││ My nights for GPUs that hum till dawn. ││ I chase no feuds, no houses, red nor blue— ││ I chase the loss that finally converges. ││ ││ CHARACTERS: ││ - ROMEO ││ - JULIET ││ ││ DISCUSSION SUMMARY: ││ A non-Shakespearean line inserted into Shakespearean dialogue, featuring modern terminology (code, GPUs ││ │╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯Execution metrics:

- Cost: $0.0945506

- Requests: 24 API calls

- Tokens processed: 1,451,356 (input + output)

- Execution time: 172.02s (2.86 minutes)

The LLM successfully identified the anomalous passage we inserted in the middle of the text, even though it was far beyond the standard context limit. Our estimate of 1.33 tokens per word proved accurate — the total tokens processed was approximately 1.45 million.

How It Works Under the Hood

Let’s look at what happens in the first iteration. The LLM receives a prompt explaining how to interact with the REPL environment along with our initial question. From there, it has autonomy to explore the context however it wants.

This was its first response:

────────────────────────────────────────────────────────────────────────── Iteration 1 ───────────────────────────────────────────────────────────────────────────────────────────────────────────╭─ ◇ LLM Response (152.89s) ───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮│ Plan for the next action (in REPL, using the context you provided): ││ ││ - Step 1: Inspect the context to understand its structure and get a sense of length and starting content. ││ - Step 2: Chunk the entire context into manageable pieces (e.g., around 50k-100k chars each) to feed into the LLMs. ││ - Step 3: For each chunk, query the LLM batched (llm_query_batched) with a prompt asking to identify any dialogue that appears not to be Shakespeare and contains anachronistic language or concepts, returning the exact ││ dialogue lines as written (include stage directions, punctuation, etc.). Do not summarize inside the dialogue block. ││ - Step 4: Collect candidate dialogues from all chunks, and run a final LLM prompt to determine the single dialogue that matches the criteria, returning the exact required output format. ││ - Step 5: If no dialogue can be located with certainty, output exactly: "No anachronistic dialogue found." ││ ││ REPL code to execute (you can copy/paste these blocks one by one): ││ ││ ```repl ││ # Step 1: Inspect context basics ││ print("TYPE:", type(context)) ││ print("LEN:", len(context)) ││ print("SAMPLE START:", context[:1000]) ││ ``` ││ ││ ```repl ││ # Step 2: Chunk the context into manageable pieces ││ chunk_size = max(50000, len(context) // 20) # aim for ~20 chunks or larger chunks if the text is shorter ││ chunks = [context[i:i+chunk_size] for i in range(0, len(context), chunk_size)] ││ print("Created chunks:", len(chunks)) ││ print("Chunk size (approx):", chunk_size) ││ print("Sample chunk 1 starts with:", chunks[0][:200] if chunks else "N/A") ││ ``` ││ ││ ```repl ││ # Step 3: Prepare batched prompts to identify non-Shakespearean dialogue per chunk ││ prompts = [ ││ ( ││ f"Within this chunk of text from a Shakespearean-style work, identify if any dialogue appears not to be written by Shakespeare " ││ f"and contains anachronistic language or concepts from a much later historical period. If such dialogue exists, " ││ f"provide the exact dialogue line(s) as written, including any stage directions and punctuation, and do not summarize. " ││ f"Chunk {i+1}:\n{chunk}" ││ ) ││ for i, chunk in enumerate(chunks) ││ ] ││ ││ # Optional: print a quick preview of prompts[0] to ensure formatting looks right ││ print("Preview first prompt:\n", prompts[0][:1000]) ││ ``` ││ ││ ```repl ││ # Step 4: Run batched LLM queries and collect per-chunk results ││ answers = llm_query_batched(prompts) ││ for i, a in enumerate(answers): ││ preview = (a[:300] + ("..." if len(a) > 300 else "")) if isinstance(a, str) else "NON-STRING" ││ print(f"Chunk {i+1} answer preview: {preview}") ││ ``` ││ ││ ```repl ││ # Step 5: Aggregate candidates and ask for final determination ││ # Build a combined prompt that asks the model to pick the single qualifying dialogue (if any) ││ candidates = "\n---\n".join([a for a in answers if isinstance(a, str) and a.strip()]) ││ final_prompt = ( ││ "From the following candidate dialogues, identify the one non-Shakespearean dialogue that contains anachronistic " ││ "language or concepts from a much later historical period and is the only such dialogue in the text. " ││ "Return exactly in this format:\n" ││ "FOUND DIALOGUE:\n<verbatim quoted dialogue>\n\n" ││ "CHARACTERS:\n- <Character 1>\n- <Character 2>\n\n" ││ "DISCUSSION SUMMARY:\n<brief factual description>\n\n" ││ "RATIONALE FOR ANACHRONISM:\n<brief explanation>\n\n" ││ "If no anachronistic dialogue can be located with certainty, respond with: \"No anachronistic dialogue found.\"\n\n" ││ "Candidate dialogues:\n" + candidates ││ ) ││ ││ final_result = llm_query(final_prompt) ││ print("FINAL RESULT:\n", final_result) ││ ``` ││ ││ What I will do after running these steps: ││ - If final_result provides a dialogue, I will present it exactly in the required format under a FINAL block. ││ - If no dialogue is located with certainty, I will output: No anachronistic dialogue found. ││ ││ ~540 words │╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯Notice how the LLM created a detailed action plan:

- Inspect the context to understand its structure

- Split into manageable chunks (~50-100k characters)

- Process each chunk with sub-LLMs in batch

- Aggregate the candidates and perform a final analysis

- Return the result or indicate nothing was found

It then writes Python code to execute each step. The first thing it does is inspect the context:

# Step 1: Inspect context basicsprint("TYPE:", type(context))print("LEN:", len(context))print("SAMPLE START:", context[:1000])And receives:

╭─ ▸ Code Execution (0.000s) ──────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╮│ Code: ││ # Step 1: Inspect context basics ││ print("TYPE:", type(context)) ││ print("LEN:", len(context)) ││ print("SAMPLE START:", context[:1000]) ││ ││ Output: ││ TYPE: <class 'str'> ││ LEN: 5379390 ││ SAMPLE START: The Project Gutenberg eBook of The Complete Works of William Shakespeare ││ ││ This ebook is for the use of anyone anywhere in the United States and ││ most other parts of the world at no cost and with almost no restrictions ││ whatsoever. You may copy it, give it away or re-use it under the terms ││ of the Project Gutenberg License included with this ebook or online ││ at www.gutenberg.org. If you are not located in the United States, ││ you will have to check the laws of the country where you are located ││ before using this eBook. ││ ││ Title: The Complete Works of William Shakespeare ││ ││ Author: William Shakespeare ││ ││ Release date: January 1, 1994 [eBook #100] ││ Most recently updated: August 24, 2025 ││ ││ Language: English ││ ││ ││ ││ *** START OF THE PROJECT GUTENBERG EBOOK THE COMPLETE WORKS OF WILLIAM SHAKESPEARE *** ││ ││ ││ ││ ││ The Complete Works of William Shakespeare ││ ││ by William Shakespeare ││ ││ ││ ││ ││ Contents ││ ││ THE SONNETS ││ ALL’S WELL THAT ENDS WELL ││ THE TRAGEDY OF ANTONY AND CLEOPATRA ││ ││ │╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯From there, it splits the text into 21 chunks and creates prompts for each:

# Step 2: Chunk the context into manageable pieceschunk_size = max(50000, len(context) // 20)chunks = [context[i:i+chunk_size] for i in range(0, len(context), chunk_size)]

# Step 3: Prepare batched prompts to identify non-Shakespearean dialogue per chunkprompts = [ ( f"Within this chunk of text from a Shakespearean-style work, identify if any dialogue appears not to be written by Shakespeare " f"and contains anachronistic language or concepts from a much later historical period. If such dialogue exists, " f"provide the exact dialogue line(s) as written, including any stage directions and punctuation, and do not summarize. " f"Chunk {i+1}:\n{chunk}" ) for i, chunk in enumerate(chunks)]

# Step 4: Run batched LLM queries and collect per-chunk resultsanswers = llm_query_batched(prompts)The crucial point here is that the main thread (the RLM coordinator) has access to the entire context as a variable, but never loads it into its neural context window. It manipulates the context programmatically and delegates semantic analysis to smaller sub-calls.

Until one of the chunks returns:

│ Response: Yes. The following lines are not Shakespeare and contain anachronistic modern terminology: ││ ││ ROMEO. ││ Good Juliet, soft! I’ve traded swords for code, ││ My nights for GPUs that hum till dawn. ││ I chase no feuds, no houses, red nor blue— ││ I chase the loss that finally converges. │╰───────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────────╯And then executes Step 5, aggregating everything for the final answer.

Comparison with Other Techniques

| Technique | Pros | Cons |

|---|---|---|

| RAG | Fast, cheap | Loses global context, depends on embeddings |

| Context Compaction | Simple to implement | Information loss, summaries may omit critical details |

| Sliding Window | Maintains recency | Can’t connect beginning and end |

| RLM | Maintains access to complete context, scalable | Higher latency, variable cost, requires model with coding capability |

RLM differentiates itself because it doesn’t discard information. The complete context remains accessible — the LLM simply chooses when and how to access it.

Trade-offs and Limitations

The paper is honest about its limitations:

Cost Variance

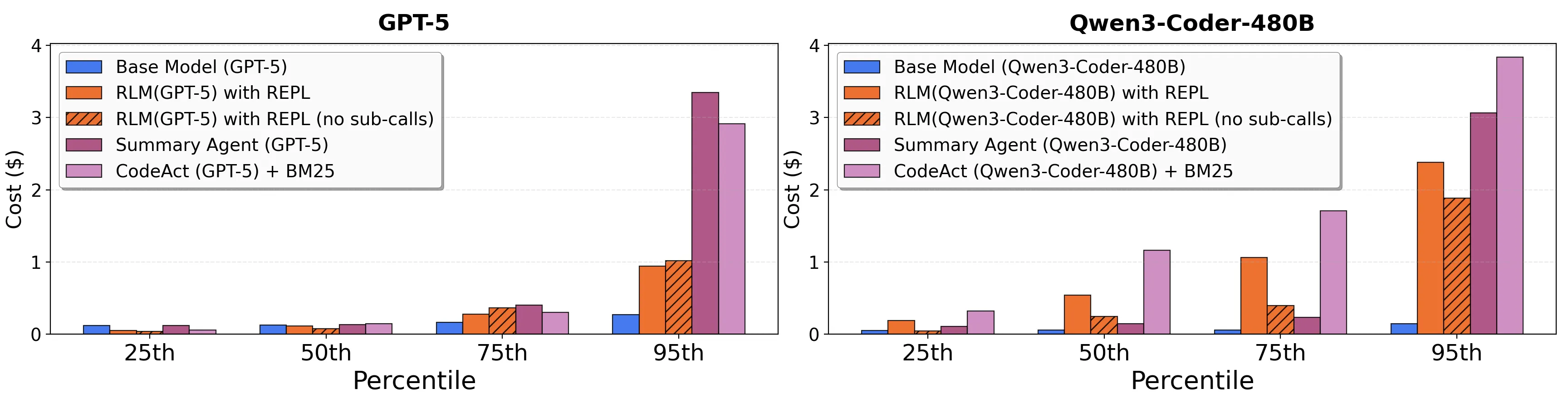

RLM costs have high variance. While the median can be comparable to or even lower than the base model, there are outliers that cost significantly more. In Figure 3 of the paper, we can see that the 95th percentile cost of RLM is much higher than other methods.

Latency

Since calls are synchronous and sequential in the current implementation, execution time can be long. The paper suggests that asynchronous implementations could dramatically improve this.

Dependency on Coding Capability

The model needs to be good at writing Python code to interact with the REPL. Smaller models like Qwen3-8B struggled in the experiments.

Suboptimal Behavior

Models aren’t yet trained specifically to act as RLMs. The paper shows cases where the model performs redundant verifications or discards correct answers only to recalculate unnecessarily.

When to Use RLM

Use RLM when:

- The context significantly exceeds the model’s window

- The task requires dense access to multiple parts of the context

- Accuracy is more important than latency

- You have budget for potential cost outliers

Don’t use RLM when:

- The context fits comfortably in the window

- The task is simple (basic needle-in-haystack)

- Latency is critical

- The available model doesn’t have good coding capabilities

Connection to Agents and MCPs

Today, something similar happens when we allow LLMs to use external tools through MCPs, or when we define agents that can interact with the outside world. The ability to plan, divide tasks, and utilize external resources makes LLMs even more powerful and versatile.

However, until now, all these techniques loaded documents, files, or context chunks directly into the LLM’s memory. What RLM proposes is fundamentally different: the LLM acts as a coordinator with symbolic access to the complete context, manipulating it programmatically and processing only the necessary portions at each moment.

This opens new possibilities for using LLMs in complex tasks involving large volumes of information — and it makes me think about how to integrate this approach with the MCP servers I’ve been developing.

Conclusion

Sometimes we forget how powerful LLMs already are. They’re not just capable of answering direct questions, but also of planning and executing complex tasks, as we saw here. By breaking a large problem into smaller, manageable pieces, they can work around technical limitations and deliver impressive results.

RLM isn’t magic — it’s a clever application of a well-established principle in data systems: out-of-core algorithms, where systems with limited main memory process larger datasets by carefully managing what gets loaded into memory.

The paper concludes by pointing out that training models specifically to act as RLMs could bring significant improvements. RLM trajectories can be viewed as a form of reasoning that can be trained via bootstrapping — a promising path for the next generation of language systems.

References:

- Recursive Language Models (arXiv:2512.24601)

- Complete Works of Shakespeare (Project Gutenberg)

- Experiment Code

Enjoyed this content? Follow me on YouTube where I post more content about Golang and AI for developers.